Before we can create a user experience, we need to explore the feasibility of our concept. Our first step was to look at the available book data.

In our representation, we ultimately chose to use Library of Congress subject headings and genres, along with book length in pages, as attributes. Each attribute is a dimension with values ranging from zero to a weight determined by inverse document frequency. The value of a given dimension, if the corresponding attribute is present for a given book, is that IDF weight divided by the number of attributes that book has.

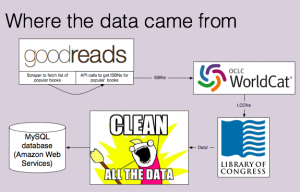

Acquiring data

It would be impractical to attempt to populate our database with all of the books on Goodreads. We elected to use a user-curated list on Goodreads titled “Best Books Ever’’ to  select the books we would put in our database. Using Beautiful Soup, we scraped the title and author, which we then sent to the Goodreads API in order to fetch the ISBN.

select the books we would put in our database. Using Beautiful Soup, we scraped the title and author, which we then sent to the Goodreads API in order to fetch the ISBN.

These ISBNs were then sent to the Worldcat xISBN interface, which allows 1000 free calls per day. Worldcat returned the LCCN number, which we could then use to fetch the Library of Congress subjects, genres, and page length via the LOC’s permalink XML service.

At each step in this process, there was some attrition. That is, for some books, Goodreads did not return an ISBN. For others, Worldcat did not return an LCCN. For others, the LCCN was invalid, or the LOC did not have any subject information for that book. This process yielded valid data for a little more than 50% of the books we attempted to process, and because of the Worldcat limit on calls per day, we ended up with a total of 2,165 books in our database.

Cleaning data

The data required extensive cleaning, despite the source. In some cases, strings that should have been in separate XML tags were lumped together. In other cases, nearly identical terms were used, or data was entered in a different style. We were able to clean much of this data, but in some cases, there was no clear way to clean the data programmatically, and in other cases, we lack the expertise to determine if subjects could be combined without losing fine shadings of meaning.

We also removed all attributes that only described a single book because they would disproportionately affect distances, making the space more sparse without adding useful information.

Calculated data

There is a power law distribution of attribute–book relationships. That is, there are only a few attributes that are commonly used across many books, and many attributes that describe only a few books. To compensate, we used a $\log$ transformation, expanding the range of IDF values.

The weight $w_i$ (i.e., maximum value) of each attribute $i$ is determined by an equation based on inverse document frequency: $w_i = -\log_{10}\left(1-0.9\frac{n_i}{N}\right)$, where $n_i$ is the number of books with attribute $i$ and $N$ is the total number of books.

Then, let book $A$ and book $B$ be books wherein $A$ has $n$ attributes and length $l_B$, and $B$ has $m$ attributes and length $l_B$. For $X = attr(A,B)$ (the combined attributes of both books), each with weight $w_i$ (as calculated above),

$$d^2(A,B) = (l_A – l_B)^2 + \sum_{i \cup attrs(A,B)} \begin{cases}

\left(\frac{n-m}{nm}\right)^2w_i^2 \textrm{ if } x_i \textrm{ in } A, B\\

\left(\frac{w_i}{n}\right)^2 \textrm{ if } x_i \textrm{ only in } A\\

\left(\frac{w_i}{m}\right)^2 \textrm{ if } x_i \textrm{ only in } B

\end{cases}$$

This simplifies significantly when we expand it:

$$d^2(A,B) = \left(\frac{n-m}{nm}\right)^2\sum_{attrs(A,B)} w_i^2 + \frac{1}{n^2}\sum_{attrs(A!B)} w_i^2 + \frac{1}{m^2}\sum_{attrs(B!A)} w_i^2 + (l_A – l_B)^2$$

We pre-calculated the distances for every pair of books in the database. For 2,165 books, that gave us a total of $N_{\textrm{books}}^2/2 = 2.3\times10^6$ distance calculations, which we added to an indexed table in our database. This enables us to search for books sorted by distance, which is an efficient way to fetch a book’s nearest neighbors.