To evaluate mBG's suggestions, we manually classified every book in the database with respect to the concept "Victorian literature". We found 49 books whose authors are broadly considered to be Victorian-era British novelists and coded them as positive. Books that closely resemble this concept but do not technically belong to it were coded as neutral, because we expect users to have trouble classifying them. All remaining books were coded as negative.

We then created a user simulator, which mimics a user who submits a shelf to mBG, receives suggestions, gives feedback, and eventually exits the application when bored or satisfied. Because the simulator responds the same way every time, we can perform multiple test runs and compare the results with confidence that user inconsistency is not causing variation from run to run.
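To make the simulator's role concrete, the following is a minimal sketch of how such a loop might look. It is not the simulator used in our experiments: the `suggest` callback, the `patience` and `target` thresholds, and the rule that neutral books receive no feedback are all assumptions introduced for illustration.

```python
from typing import Callable, Dict, List, Set


def simulate_user(
    suggest: Callable[[Set[str], Set[str]], List[str]],
    labels: Dict[str, str],
    shelf: Set[str],
    patience: int = 3,     # hypothetical: rounds without a hit before "bored"
    target: int = 5,       # hypothetical: accepted books before "satisfied"
    max_rounds: int = 100,
) -> Set[str]:
    """Run one simulated session and return the books the user accepted."""
    accepted: Set[str] = set()
    rejected: Set[str] = set()
    rounds_without_hit = 0

    for _ in range(max_rounds):
        # The recommender sees the shelf plus all feedback given so far.
        suggestions = suggest(shelf | accepted, rejected)
        if not suggestions:
            break

        found = False
        for book in suggestions:
            label = labels.get(book, "negative")
            if label == "positive":
                accepted.add(book)
                found = True
            elif label == "negative":
                rejected.add(book)
            # Assumption: neutral books get no feedback at all.

        rounds_without_hit = 0 if found else rounds_without_hit + 1
        if len(accepted) >= target or rounds_without_hit >= patience:
            break  # satisfied or bored

    return accepted
```

Because the feedback is read directly from the manual coding, two runs with the same recommender output produce the same session, which is the property the evaluation relies on.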

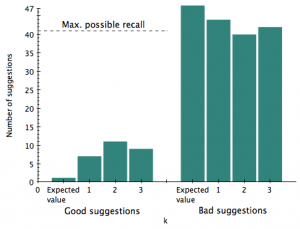

We systematically tested mBG for several values of $k$, using our shelf and simulator. For comparison, we devised a baseline algorithm that samples books with the attribute that is most frequent among books on the shelf. Over 50 trials, however, this algorithm yielded no good suggestions, so we instead compared mBG's performance with the expected value of a random draw of size $k$.
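The expected value of a random draw has a closed form: when $k$ books are drawn without replacement from $N$ candidates of which $P$ are positive, the number of positives drawn is hypergeometric with mean $kP/N$. The sketch below computes this baseline. The function name is our own for illustration, and the database size in the usage note is a placeholder, not a figure from our data.

```python
from typing import Dict


def random_draw_baseline(k: int, n_positive: int, n_total: int) -> Dict[str, float]:
    """Expected performance of drawing k books uniformly without replacement."""
    if not 0 <= n_positive <= n_total:
        raise ValueError("n_positive must lie between 0 and n_total")
    if not 0 <= k <= n_total:
        raise ValueError("k must lie between 0 and n_total")

    # Mean of the hypergeometric distribution.
    expected_hits = k * n_positive / n_total
    return {
        "expected_hits": expected_hits,
        # Expected precision is the base rate of positives.
        "expected_precision": expected_hits / k if k else 0.0,
        # Expected recall is the fraction of the candidates that was drawn.
        "expected_recall": expected_hits / n_positive if n_positive else 0.0,
    }
```

For example, `random_draw_baseline(3, 41, 1000)` evaluates the baseline for $k = 3$ with 41 positives in a hypothetical pool of 1000 candidates.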

As the figure shows, for $k = 1$, $2$, and $3$, mBG makes better suggestions than a baseline that suggests books at random, but it still achieves low recall and low precision. Out of 41 books that it could have correctly suggested, mBG never suggested more than 11. At the same time, mBG made many incorrect suggestions. This is likely a result of the small number of positives relative to the size of the data set as a whole. We discuss strategies for compensating for this in Future Work.
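For reference, precision and recall over a set of suggestions can be computed from the manual coding as in the sketch below. The treatment of neutral books is an assumption made for illustration: here they are excluded from scoring entirely, so they count neither for nor against the recommender. Books already on the shelf are likewise excluded.

```python
from typing import Dict, Iterable, Set, Tuple


def precision_recall(
    suggested: Iterable[str],
    labels: Dict[str, str],
    shelf: Set[str],
) -> Tuple[float, float]:
    """Score suggestions against the manual positive/neutral/negative coding."""
    # Positives the recommender could still find (shelf books are already known).
    positives = {
        book for book, label in labels.items()
        if label == "positive" and book not in shelf
    }
    # Assumption: neutral books and shelf books are ignored when scoring.
    scored = {
        book for book in suggested
        if book not in shelf and labels.get(book, "negative") != "neutral"
    }
    hits = len(scored & positives)
    precision = hits / len(scored) if scored else 0.0
    recall = hits / len(positives) if positives else 0.0
    return precision, recall
```

Under this definition, the best run reported above (11 correct suggestions out of 41 possible) corresponds to a recall of $11/41 \approx 0.27$.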